Use of Artificial Intelligence (AI)

AI and language services

These days it’s almost impossible to escape the AI hype machine. The “artificial intelligence” label is currently getting slapped onto everything. Even my local radio station’s traffic report is now allegedly enhanced by “AI.” (Spoiler alert: It’s still only semi-reliable and heavily depends on humans reporting traffic jams and delays.)

Unfortunately, quite a few language service providers – including big agencies and freelancers – are fully on board with the hype. Outlandish claims abound. The misleading inevitability myth flourishes. And just as more and more AI slop is ruining the internet, the quality of translations is trending toward mediocrity.

You deserve better. Therefore, this page clarifies what the term “AI” actually covers and explains how I do and do not use it. If you have any further questions, you’re welcome to get in touch via my contact form.

The short version: I am not opposed to modern technology, but I do not use “generative AI” and similar systems that put the confidentiality of your content at risk and compromise the quality of my work.

What is “artificial intelligence”?

This is the million-dollar question – and there is no clear answer. That’s because the term “artificial intelligence” does not have, and never had, a precise definition. It was coined in 1955, in the middle of the Cold War, by researchers proposing a workshop to be held at Dartmouth College in the summer of 1956 and hoping to attract not just interest from fellow scientists but also funding from the government and private foundations. In other words, the term “artificial intelligence” has been around for decades and has served marketing interests right from the start.

This is why I often put this term into quotation marks. “AI” is just a shallow buzzword, an umbrella term. But to have at least a basic definition, we can say: “Artificial intelligence” refers to computational systems (machines, computers, robots) that can analyze and learn from data (in supervised or unsupervised ways) in order to perform tasks that normally require human intelligence (reasoning, generalizing, making decisions).

A wide range of methods and technologies are now habitually lumped together under this umbrella term, from classification algorithms and cluster analyses to natural language processing and computer vision to complex neural networks and deep learning. Some real-world applications based on those methods are quite useful, and I wouldn’t want to live without them. Optical character recognition helps me digitize paper documents. Spam filters spare me a lot of frustration even when they don’t work perfectly. Sensors in my car remind me of the speed limit in case I have overlooked a traffic sign.

The problem with “generative AI”

The examples mentioned above make my life easier or less expensive. And in many cases (excluding the military-industrial complex and related scenarios), using such systems and tools isn’t much different from using a calculator or a computer. But there is one type of “AI” that is problematic by design and comes with a devastating bottom line: generative artificial intelligence (GenAI).

In simple terms, these systems ingest a massive amount of training data to learn patterns, then store those patterns in the form of millions or even billions of parameters and generate new content based on those parameters and on prompts containing specific instructions. To be more precise, the problem is not with this approach per se (because it can be useful) but with its use in inappropriate ways where other techniques – or no “AI” at all – would be the better choice.

This is the type of automation that has led to all the current hype. And again, it’s not about some benevolent goal or real progress but about marketing, greed, and power. Of course, the con men fueling the hype (and yes, most of them are men) will claim otherwise and promise you the moon.

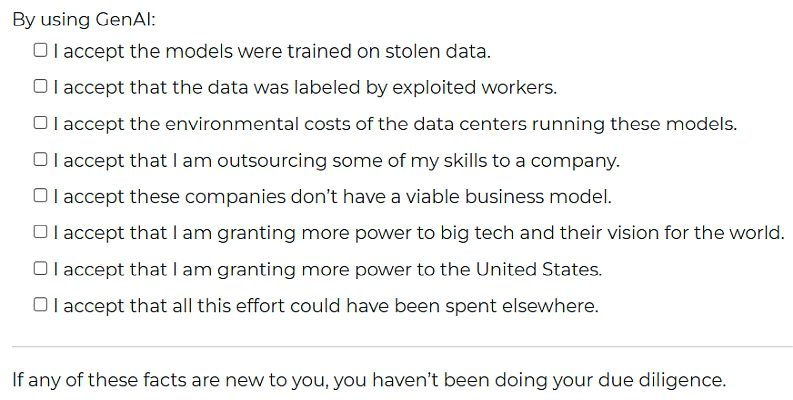

But at the end of the day, one truth stands behind their lies: Morally corrupt corporations such as OpenAI, Anthropic, Midjourney, xAI, Microsoft, Google, Nvidia, and a few others are systematically stealing and misusing copyrighted material, building their systems on the backs of exploited workers, recklessly poisoning communities and ignoring the will of local residents, aggravating the climate crisis with the aggressive water consumption and energy-intensive processes of data centers (concealed from the public through greenwashing and non-reporting), abetting the destruction of information ecosystems while making us dumber, enabling violence (including mass shootings, non-consensual deepfake pornography, and teen suicides), and moving billions of dollars in circles from A to B to C and back to A, trying to keep the giant bubble afloat just a little bit longer until it bursts and hurts everyone but the tech billionaires.

My approach to “AI” and “GenAI”

As you can tell, I’m not a fan of unregulated and harmful “generative AI.” There is no ethical use of ChatGPT, Claude, Gemini, and similar models because it’s not just a few rotten apples amidst useful tools – the whole barrel has been spoiled, the whole infrastructure is based on exploitation and abuse.

As long as Silicon Valley and tech bros around the world keep breaking things, with regulators looking on due to ignorance and/or incompetence, it is up to users, consumers, and businesses to make informed decisions and reject these destructive systems. And why would you even want to use them? More and more studies confirm that “AI” doesn’t deliver any significant productivity boosts and makes employees drown in “workslop” instead.

My goal is to provide you with accurate translations and high-quality language services at a fair and affordable price. This goal is incompatible with the use of GenAI systems that deliver low-quality output and cause unnecessary extra work.

ChatGPT & Co. have no place in my regular workflows. I don’t pay for subscriptions to these platforms and I don’t expose your confidential data to them.

This does not mean I am generally opposed to technology, and it does not mean I reject all forms of “artificial intelligence” either. But I try to be more conscious of the kinds of tools I use and of their impact on society. Sometimes there are moral gray zones, so I weigh potential harms against potential benefits. And most importantly, I don’t make false promises regarding the capabilities of “AI” systems.

“AI” features of translation software

When it comes to specific tools for language service providers, things get a bit muddled. As I explain on my MTPE services page, so-called CAT software (Computer-Assisted Translation) has been around for a long time and offers various features that do indeed make translators more efficient.

Unfortunately, the companies behind such tools have mostly succumbed to the “AI” hype as well. RWS tout Trados Studio as “the most advanced AI-powered translation environment for language professionals.” Their competitors at memoQ aren’t as in-your-face with the hype but still offer “AI-based translation automation technology.” The cloud-based Phrase localization platform claims to be “intelligently powered by AI,” while the folks at Lokalise call their product “the world-class AI localization platform.” Sigh.

For now, CAT tools are still useful to me. Eliminating them from my workflow would not be a rational response. Their options for terminology and project management help me work faster and lower my rates for you. But my approach outlined above still applies: I put quality first, and I disable and do not use “AI” features/plug-ins that would expose your confidential content to third parties.

Speaking of confidentiality: Have other providers made you promises along the lines of “we use a DeepL Pro subscription so your sensitive content will not be shared with external parties”? You might want to follow up with them on that – because DeepL’s unique selling point is no more.

Further resources

Many of the links on this page will lead you to critical reporting that itself includes links to research insights, studies, and further information. In addition:

- The AI Con by Emily M. Bender and Alex Hanna is a good introductory book for general audiences and provides an overview of the problems with “artificial intelligence” and “big tech.”

- If you have a lot of time on your hands and love to dig deep, Prof. Dagmar Monett curates a non-exhaustive collection of books worth reading on topics closely related to Critical AI.

- If you prefer audio/video, this interview with Karen Hao on cult-like AI companies is a must-watch, and the Tech Won’t Save Us podcast is a must-listen.

- And if you want to fill your social media feeds with valuable insights, follow people like the ones just mentioned as well as Abeba Birhane, Casey Fiesler, Timnit Gebru, Jürgen Geuter, Dan McQuillan, Brian Merchant, Margaret Mitchell, Tom Mullaney, and Émile P. Torres. (There are many other important voices in this field, of course, but these experts are a good starting point. They often share others’ work, so you’ll be exposed to a lot of useful knowledge.)